Time Flies. How to Keep Up With AI

AI tools evolve fast. What looked cutting-edge six months ago may already be outdated. Here’s how to avoid turning old AI workflows into bad habits.

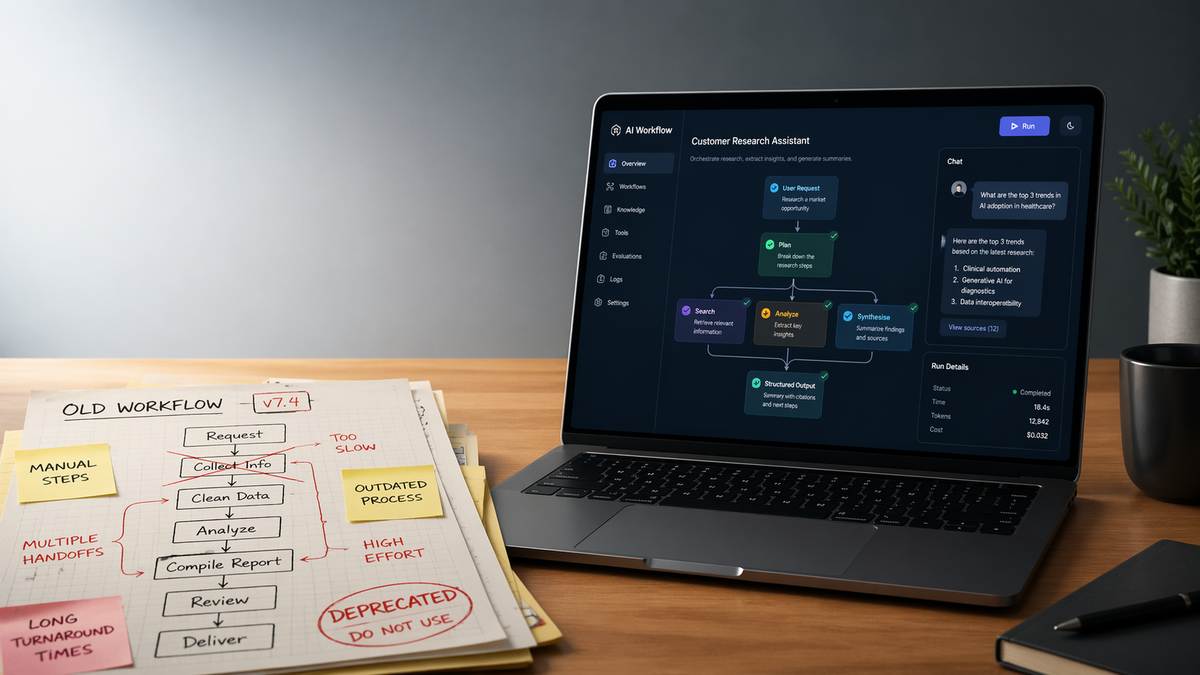

Last year’s advanced workflow can become this year’s bad habit.

by Jana Diamond, PMP

AI moves fast. Everybody says that. And for once, the hype machine is telling the truth.

What they rarely say is this: sometimes the fastest way to fall behind is to keep doing what made you look smart six months ago.

I’ve watched talented people keep using yesterday’s advanced AI workflow like it’s still cutting edge, even after the models, tools, and tradeoffs changed underneath them. The result is weirdly common: they’re not following current best practice. They’re preserving an old workaround and calling it expertise.

That’s one of the hardest parts of keeping up with AI. The field moves fast enough that a method can go from clever to clunky before the team even notices. And if the person using it is “the AI person,” everybody else may assume it must still be right.

It may have been right.

Last year.

Or last month.

There are two easy ways to fall behind in AI, and people manage to hit both with impressive consistency.

The first is obvious: chasing every shiny new thing like a Labrador in a tennis ball factory. New model, new framework, new benchmark, new startup, new LinkedIn prophet declaring everything changed by lunchtime. That kind of panic-driven “staying current” usually just turns into noise, churn, and a lot of architecture decisions nobody can explain six weeks later.

The second is sneakier, and more common in real work. Somebody figured out a smart way to handle a real limitation six months ago, and now they’re still using it like it’s the official playbook. The problem is that AI changes fast enough for a clever workaround to quietly become unnecessary complexity. The game changed. Their playbook didn’t.

That’s the part people miss when they talk about “experience.” In most domains, experience compounds. In AI, some of it does. The fundamentals matter. Judgment matters. Knowing how to test, verify, scope, and think clearly still matters. A lot. But a surprising amount of so-called AI expertise is really just a collection of hacks built around old limitations, old model behavior, or old tooling assumptions. If you never go back and question those assumptions, yesterday’s sophistication turns into today’s drag.

Keep the fundamentals. Re-test the hacks.

This is where people get themselves in trouble. They throw out the wrong things.

The fundamentals usually age just fine. Clear thinking. Good scoping. Strong evaluation. Knowing what the system is supposed to do, what it actually did, and who is still responsible when it gets it wrong. None of that went out of style because a new model dropped on Tuesday. If anything, those matter more now than they did when the tools were weaker.

The hacks are a different story. A lot of what passed for “advanced AI technique” a year ago was really just a workaround for missing capability, weak reliability, or tooling that hadn’t matured yet. People built elaborate prompt scaffolding because the models needed hand-holding. They over-engineered retrieval because the models were worse at disambiguation. They split tasks into awkward little pieces because context windows were tighter, tool use was shakier, or structured output was less reliable. Some of that was smart at the time. Some of it was necessary. Some of it is now just dead weight.

That’s the part worth checking. Not every clever trick deserves to become permanent architecture. If the model got better, the tools got better, and your method stayed exactly the same, there’s a decent chance you’re preserving complexity that no longer earns its keep.
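One way to make that check concrete is to run the old workaround and the simpler path against the same small evaluation set and compare. The sketch below is only an illustration under stated assumptions: `fake_model`, `legacy_pipeline`, `simple_pipeline`, the three evaluation cases, and the `min_gain` threshold are all hypothetical stand-ins, not anything from a real library or a real project. In practice you would swap in your own model calls and your own cases.

```python
from typing import Callable, List, Tuple

# Hypothetical evaluation cases: (input, expected output).
# Real cases should come from your own task, not from this sketch.
EVAL_CASES: List[Tuple[str, str]] = [
    ("2 + 2", "4"),
    ("3 * 5", "15"),
    ("10 - 7", "3"),
]

# Stand-in for a real model call (assumption: a plain lookup table).
_CANNED_ANSWERS = {"2 + 2": "4", "3 * 5": "15", "10 - 7": "3"}


def fake_model(prompt: str) -> str:
    return _CANNED_ANSWERS.get(prompt, "")


def legacy_pipeline(prompt: str) -> str:
    """Last year's workaround: extra cleanup steps wrapped around the model."""
    normalized = " ".join(prompt.split())  # scaffolding step 1: normalize input
    answer = fake_model(normalized)
    return answer.strip()                  # scaffolding step 2: clean output


def simple_pipeline(prompt: str) -> str:
    """The simpler path: just ask the model."""
    return fake_model(prompt)


def accuracy(pipeline: Callable[[str], str], cases: List[Tuple[str, str]]) -> float:
    """Fraction of cases where the pipeline output matches the expected answer."""
    hits = sum(1 for prompt, expected in cases if pipeline(prompt) == expected)
    return hits / len(cases)


def workaround_earns_its_keep(
    legacy: Callable[[str], str],
    simple: Callable[[str], str],
    cases: List[Tuple[str, str]],
    min_gain: float = 0.05,  # hypothetical threshold; pick your own
) -> bool:
    """Keep the workaround only if it beats the simple path by at least min_gain."""
    return accuracy(legacy, cases) - accuracy(simple, cases) >= min_gain


if __name__ == "__main__":
    print("legacy accuracy:", accuracy(legacy_pipeline, EVAL_CASES))
    print("simple accuracy:", accuracy(simple_pipeline, EVAL_CASES))
    print("keep workaround:", workaround_earns_its_keep(
        legacy_pipeline, simple_pipeline, EVAL_CASES))
```

The design choice here is that the workaround has to prove a measurable gain to stay. If both paths score the same, the extra complexity is the thing that goes.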

A workaround is not a principle.

Don’t marry it.

Keeping up with AI does not mean chasing every launch, every benchmark, or every shiny new toy somebody posts before lunch.

It also does not mean clinging to the workaround that made you look smart six months ago.

The tools will keep changing. The names will keep changing. The demos will keep getting shinier.

The real job is simpler than that. Keep the judgment. Keep the fundamentals. And every once in a while, take a hard look at your “best practice” and ask whether it’s still best, or just familiar.

Because in AI, sometimes the fastest way to fall behind is to keep doing what used to work.

Originally published on Protovate.AI

Protovate builds practical AI-powered software for complex, real-world environments. Led by Brian Pollack and a global team with more than 30 years of experience, Protovate helps organizations innovate responsibly, improve efficiency, and turn emerging technology into solutions that deliver measurable impact.

Over the decades, the Protovate team has worked with organizations including NASA, Johnson & Johnson, Microsoft, Walmart, Covidien, Singtel, LG, Yahoo, and Lowe’s.
