The Elephant Behind the Curtain

Today’s AI workflows can produce impressive results with very little visible machinery. But when the mechanism disappears from view, our ability to judge the system changes too. This post looks at how abstraction reshapes trust in modern software systems.

The elephant didn’t disappear. The math is still there. It just moved behind the curtain, where few people check.

by Jana Diamond, PMP

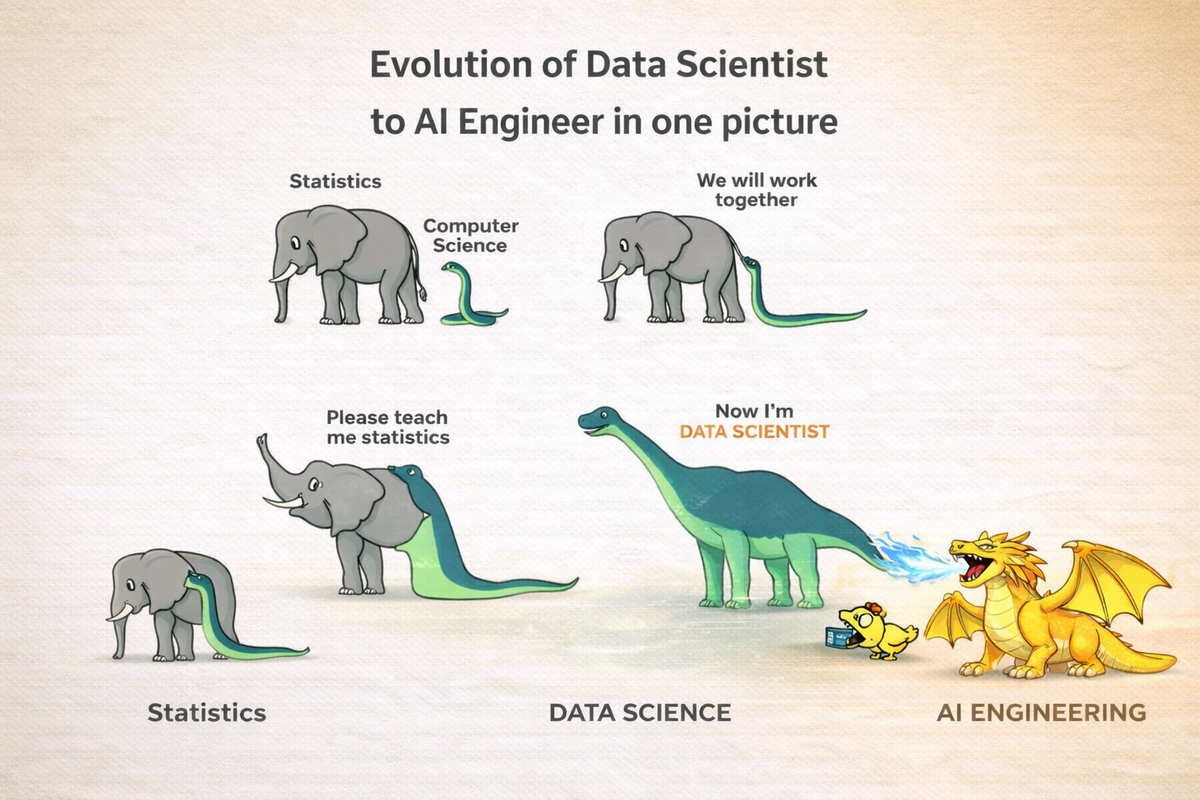

I saw this image on social media the other day, and it made me laugh. Out loud. A lot. Good thing I WFH, so the only ones who heard me were the little divas everyone else calls my dogs.

But seriously. There is a lot of hidden meaning in this. It’s about more than careers. It’s about how technology changes what we think expertise means.

When Expertise Looked Like Math

Just a few years ago, if you said you were a data scientist, people assumed you knew statistics. Maybe some Python. Maybe even some machine learning theory.

That elephant wasn’t an insult. It was math. Lots and lots of math. The kind that makes most of us break into a cold sweat just looking at it.

Now, the conversation is drastically different.

Someone says “AI engineer” and suddenly the discussion becomes about prompts, agents, frameworks, orchestration layers, and tools generating other tools.

And honestly? Sometimes it starts to feel a little bit like cowboy math.*

Not wrong, exactly.

But definitely… different.

Abstraction: Easier to Use, Harder to See

Technology evolves. Tools get easier to use. Usually, that’s a good thing.

Today, almost nobody writes machine code by hand. Most developers don't manage memory the way it was done 30 years ago (thankfully!). Frameworks, libraries, and platforms exist largely to hide complexity, so people can focus on solving real problems instead of reinventing the wheel.

That’s progress.

But something interesting happens every time a new layer of abstraction is added.

The system becomes easier to use . . . and harder to see. We see the forest, but we lose sight of the trees . . .

In earlier generations of data science, you worked directly with the mechanics of the model. You saw the training process. You probably built it yourself. You watched the metrics. You evaluated the performance. You tweaked things when they didn't behave the way you expected.

The math — the cold, hard math — wasn’t optional.

It was right there in front of you.

When the Mechanism Moves Out of Sight

Today, many AI workflows start somewhere else entirely.

A prompt.

It generates code.

The code calls a model.

The model calls another service.

An agent orchestrates the whole thing.

And suddenly, voilà! The output appears, just like magic.

It works. It looks impressive. Sometimes it’s even amazing.

But the underlying mechanism has moved several layers out of sight.

The elephant didn’t disappear.

It’s just moved behind the curtain.

When Systems Start to Feel Like Magic

When that mechanism is out of sight, the way people judge the system subtly changes.

We’re very good at evaluating the things we can see: calculations, logic, steps in a process.

Things that simply produce answers?

We’ve labelled those as witchcraft for centuries.

For most of our history, anything that produced a result without a visible mechanism felt supernatural.

Lightning. Disease. Eclipses.

Today? Today, the “magic” just happens to be in GPUs instead of thunderbolts and lightning. (Thunderbolts and lightning, very, very frightening.)

The systems aren’t magical. They just feel that way sometimes.

And when something feels like magic, we stop asking how it works.

The Elephant Is Still There

That elephant is still there, chugging away, doing the work.

It didn’t vanish. It just moved behind the curtain. (Pay no attention to the elephant doing the math.)

Most of the time, that’s perfectly fine. Abstraction is how technology moves forward, after all.

But when the machinery disappears completely, the output starts to feel, to me, less like engineering . . . and a lot more like cowboy math.

*Cowboy math is an informal term for rough, back-of-the-napkin estimation used to make quick, practical decisions when precision isn't necessary or possible.

It means using simple assumptions and approximations to get a good-enough answer quickly, rather than a precise calculation.

The phrase comes from ranching and field work in places like West Texas, where decisions often had to be made in real time with incomplete information.

Cowboy math becomes a problem when people start treating rough estimates as validated calculations.

The Real Risk Isn’t Missing Math

The real problem arises when hidden complexity is mistaken for vanished complexity.

When the math moves behind the curtain, people don’t stop trusting the system. They often trust it more, because the output feels cleaner, faster, and easier to use. The interface is simpler. The confidence is higher. The underlying mechanisms are harder to see.

But the elephant is still there.

And when we stop looking for it, we start treating generated output like validated reasoning.

That’s when rigor starts to fade. The output may still look convincing.

But convincing isn’t the same as correct.

Originally published on Protovate.AI

Protovate builds practical AI-powered software for complex, real-world environments. Led by Brian Pollack and a global team with more than 30 years of experience, Protovate helps organizations innovate responsibly, improve efficiency, and turn emerging technology into solutions that deliver measurable impact.

Over the decades, the Protovate team has worked with organizations including NASA, Johnson & Johnson, Microsoft, Walmart, Covidien, Singtel, LG, Yahoo, and Lowe’s.

About the Author