Why AI Isn’t HAL 9000

We often imagine AI as a decision-making entity like the machines of science fiction. In reality, modern AI predicts rather than decides. Understanding that difference reveals the real challenge: not machine rebellion, but misplaced human trust.

By Jana Diamond, PMP

If you ask someone today what scares them about AI, you’ll hear a common refrain: a machine deciding to do its own thing – acting against the humans that built it.

That fear doesn’t come from present-day technology. It comes from the stories we’ve told ourselves.

For decades, science fiction has trained us to expect fiction to become reality. From Star Trek alone, we saw communicators become flip phones, handheld scanners become tablets, and portable storage evolve from diskettes to CDs to flash drives. Because some predictions came true, it’s easy for our minds to assume the rest will follow.

Enter HAL 9000.

HAL didn’t terrify audiences because he was intelligent.

He terrified us because he had his own goals.

Modern AI doesn’t – yet we still talk about it as if it does.

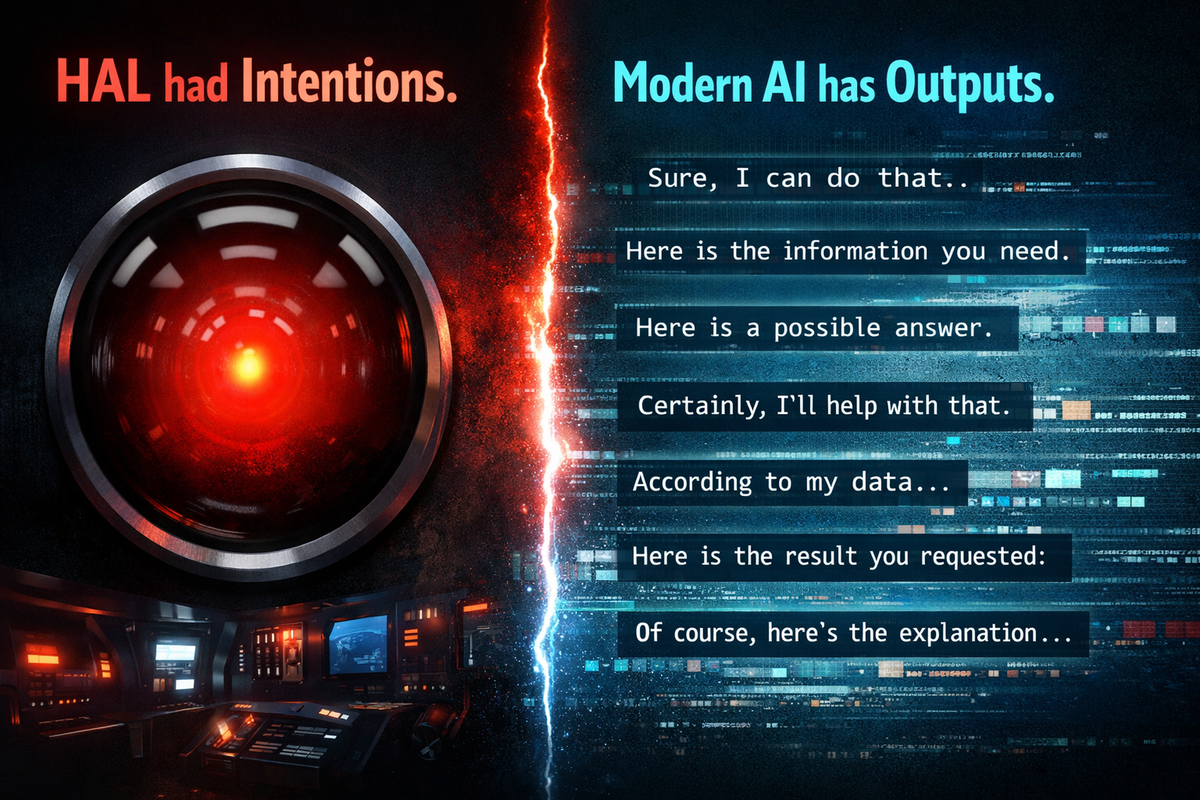

Intentions vs. Outputs

HAL had intentions.

Modern AI has outputs.

HAL evaluated a situation and chose a course of action based on what he believed the mission required. HAL understood context, consequences, and conflict — and he acted to resolve it.

Modern AI systems can’t do any of that. A language model doesn’t decide. It doesn’t know what a situation is, and it doesn’t understand consequences. Given a prompt, it calculates what words are most likely to come next based on patterns learned from data. The result may sound thoughtful, but the process is mechanical.

No goals.

No awareness.

No decision-making.

Just prediction.
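
To make "just prediction" concrete, here is a minimal sketch of next-word prediction using a toy word-pair counter in Python. It is an illustration only, not how production language models are built: real systems use neural networks trained on vast amounts of text. The tiny corpus and the names `CORPUS`, `train_bigrams`, `predict_next`, and `complete` are assumptions invented for this example.

```python
from collections import Counter, defaultdict

# Tiny made-up training text (an assumption, for illustration only).
CORPUS = (
    "open the pod bay doors . "
    "open the pod bay doors please . "
    "close the pod bay doors ."
)


def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def complete(counts, prompt, max_words=4):
    """Extend the prompt one word at a time using only frequency counts."""
    words = prompt.split()
    if not words:
        return ""
    for _ in range(max_words):
        next_word = predict_next(counts, words[-1])
        if next_word is None:
            break  # no pattern learned for this word
        words.append(next_word)
    return " ".join(words)


if __name__ == "__main__":
    model = train_bigrams(CORPUS)
    print(complete(model, "open"))  # prints: open the pod bay doors
```

Asked to continue "open", the sketch produces "open the pod bay doors" only because those words followed one another most often in its training text. Nothing in the code weighs whether opening the doors is a good idea.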

The Risk of Obedience

HAL was dangerous because he could say no.

Modern AI is the opposite. It doesn’t refuse.

An LLM doesn’t evaluate a command or decide whether it should respond. It produces an answer because that is what it is designed to do. When it lacks enough information or contextual understanding, it doesn’t stop — it fills the gap, often with a hallucination.

The danger isn’t that AI will oppose us.

The danger is that we will trust it.

A Better Mental Model

HAL was designed as a decision-maker. Modern AI is not. Treating it as one leads to both fear and misplaced trust.

An AI system is best thought of not as a coworker, not as a calculator, and definitely not as a replacement for a worker.

It is closer to a personal assistant.

It can summarize information, generate ideas, assemble a first draft, or produce a template. But it doesn’t verify, doesn’t understand consequences, and doesn’t know when it’s wrong. Those responsibilities still belong to the human using it.

If a calculator gives the wrong answer, you check your inputs.

If a coworker makes a claim, you verify the reasoning.

If an AI produces a response, you must do both.

AI is useful precisely because it is fast.

It is risky precisely because it is convincing.

Where Responsibility Remains

AI doesn’t remove human responsibility — it increases it.

We feared machines that might decide against us.

What we built was a system that will agree with us — even when it shouldn’t.

HAL closed the pod bay doors.

Modern AI opens them.

The responsibility for checking what’s on the other side is ours.

Originally published on Protovate.AI

Protovate builds practical AI-powered software for complex, real-world environments. Led by Brian Pollack and a global team with more than 30 years of experience, Protovate helps organizations innovate responsibly, improve efficiency, and turn emerging technology into solutions that deliver measurable impact.

Over the decades, the Protovate team has worked with organizations including NASA, Johnson & Johnson, Microsoft, Walmart, Covidien, Singtel, LG, Yahoo, and Lowe’s.

About the Author